Retrieval-Augmented Generation (RAG) is an AI approach where a model first retrieves relevant external information, like documents or databases, and then uses it to generate accurate responses. It’s important because it makes AI more reliable, up-to-date, and context-aware, especially for enterprise applications that require precise, verifiable, and domain-specific answers.

Large language models are powerful, but on their own they have a structural limitation. They generate answers based on patterns learned during training, not by looking up the latest or most authoritative information at the moment a question is asked. That gap becomes a serious problem in enterprise AI.

A model may sound confident, yet still give an outdated, incomplete, or fabricated response when asked about a product update, an internal policy, a technical document, or a regulatory requirement.

What is Retrieval-Augmented Generation (RAG)?

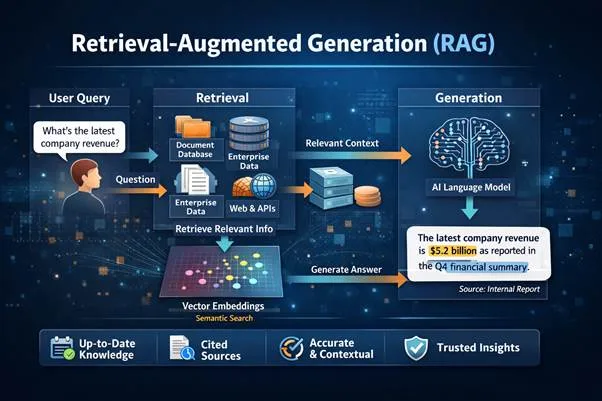

RAG is an architectural pattern that combines information retrieval with language generation. Instead of forcing the model to answer from memory alone, the system first retrieves relevant information from external sources such as documents, databases, internal knowledge bases, or APIs. That retrieved information is then supplied to the model as context so the answer is grounded in real source material.

This approach separates knowledge storage from reasoning. Enterprise information often resides in distributed systems like CRM platforms, ERP tools, and knowledge bases, where it is continuously updated. RAG allows the model to access this dynamic information at query time, rather than attempting to internalize it.

As a result, the model can focus on interpreting and synthesizing the retrieved information. This helps keep responses both coherent and aligned with real, verifiable data.

How a RAG System Works Step by Step

Step 1. The user submits a query

The process begins with a natural language question such as:

- “What is the approval workflow for purchase requisitions?”

- “How does SAP handle invoice matching exceptions?”

- “What changed in the latest product release?”

The system first interprets the query. In more advanced implementations, this stage may include query rewriting, intent detection, entity extraction, or access control checks.
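A minimal sketch of this interpretation stage follows. It makes two assumptions. First, a hypothetical abbreviation map stands in for query rewriting. Second, a hypothetical role-to-collection table stands in for access control checks. Production systems often use an LLM or NLP pipeline for rewriting and an identity provider for permissions, so every name here is illustrative only.

```python
import re
from dataclasses import dataclass

# Hypothetical abbreviation map used for simple query rewriting.
ABBREVIATIONS = {
    "pr": "purchase requisition",
    "po": "purchase order",
}

# Hypothetical mapping from user roles to the collections they may search.
ROLE_COLLECTIONS = {
    "procurement": {"procurement_policies", "sap_docs"},
    "support": {"release_notes", "sap_docs"},
}


@dataclass
class PreparedQuery:
    text: str                      # rewritten query used for retrieval
    allowed_collections: set[str]  # access scope applied before searching


def prepare_query(raw_query: str, role: str) -> PreparedQuery:
    """Normalize the query, expand known abbreviations, and attach an access scope."""
    # Lowercase and collapse runs of whitespace into single spaces.
    text = re.sub(r"\s+", " ", raw_query.strip().lower())
    # Expand abbreviations only as whole words, so "pr" inside "approval" is untouched.
    for short, full in ABBREVIATIONS.items():
        text = re.sub(rf"\b{re.escape(short)}\b", full, text)
    # Unknown roles get an empty scope, so nothing can be retrieved for them.
    allowed = set(ROLE_COLLECTIONS.get(role, set()))
    return PreparedQuery(text=text, allowed_collections=allowed)
```

For example, `prepare_query("What is the approval workflow for a PR?", "procurement")` produces the text "what is the approval workflow for a purchase requisition?". Its search scope is limited to the two procurement collections.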

Step 2. The query is converted into a retrieval-ready form

To search effectively, the question must be transformed into a representation suitable for retrieval. This often involves embeddings, which are dense vector representations of text that capture semantic meaning. The user’s query is embedded and compared against embedded document chunks stored in a vector database.
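The comparison step can be sketched as follows. A real system would call a learned embedding model and a vector database. Here, as a stated stand-in, the hypothetical `toy_embed` function hashes words into a fixed-size vector so the example runs with the standard library alone. The part that carries over to real systems is the ranking logic: cosine similarity between the query vector and each chunk vector.

```python
import hashlib
import math

DIM = 256  # Assumed vector size for the toy embedding.


def toy_embed(text: str) -> list[float]:
    """Stand-in for a real embedding model: a hashed bag-of-words vector."""
    vec = [0.0] * DIM
    for raw in text.lower().split():
        word = raw.strip(".,?!:;\"'()")
        if word:
            # sha256 gives a stable bucket across runs, unlike the built-in hash() for str.
            bucket = int(hashlib.sha256(word.encode("utf-8")).hexdigest(), 16) % DIM
            vec[bucket] += 1.0
    return vec


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_chunks(query: str, chunks: list[str], k: int = 3) -> list[tuple[float, str]]:
    """Embed the query, score each stored chunk, and return the k best (score, chunk) pairs."""
    query_vec = toy_embed(query)
    # A real system embeds chunks once at ingestion and keeps the vectors in a
    # vector database; they are re-embedded here only to keep the sketch short.
    scored = [(cosine(query_vec, toy_embed(chunk)), chunk) for chunk in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]
```

Real embeddings capture meaning beyond shared words, such as synonyms and paraphrases, which this toy vector cannot do. The retrieve-by-similarity structure stays the same.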

Step 3. Relevant content is retrieved from external sources

The system then retrieves the most relevant passages, not necessarily the entire documents. This is an important detail. Most RAG systems do not pass full files to the model. Instead, they first split source material into chunks during ingestion. Those chunks may be paragraphs, sections, policy clauses, ticket summaries, or structured records.
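Chunking happens at ingestion time. Below is a minimal sketch that splits each document into overlapping word windows and tags each chunk with its document ID, so the answer can be traced back to its source later. Word windows are a simple, swapped-in strategy. Many pipelines instead split on structure such as headings, paragraphs, or policy clauses. The default sizes are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str  # source document, kept for citations later
    index: int   # position of the chunk within the document
    text: str


def chunk_document(doc_id: str, text: str, size: int = 200, overlap: int = 40) -> list[Chunk]:
    """Split a document into windows of `size` words; consecutive windows share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks: list[Chunk] = []
    for start in range(0, len(words), step):
        chunks.append(Chunk(doc_id, len(chunks), " ".join(words[start:start + size])))
        # Stop once a window reaches the end, so no chunk is just leftover overlap.
        if start + size >= len(words):
            break
    return chunks
```

The overlap means a sentence that straddles a boundary still appears whole in at least one chunk, at the cost of storing some text twice.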

Step 4. The system builds an augmented prompt

Once the most relevant passages are selected, the application constructs a prompt that combines:

- The original user question

- System instructions

- Retrieved context

- Optional citation formatting rules

- Guardrails such as “answer only from provided sources”

This is called the augmentation step in Retrieval-Augmented Generation. The language model is no longer answering in isolation. It is answering with evidence in context. Careful prompt design is critical here.
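Here is a minimal sketch of the augmentation step, assuming the retriever returns (source name, passage) pairs. The instruction wording and layout are illustrative. Many chat-style model APIs take system instructions as a separate message rather than one combined string.

```python
SYSTEM_INSTRUCTIONS = (
    "You are an internal knowledge assistant. "
    "Answer only from the provided sources. "
    "If the sources do not contain the answer, say so."
)


def build_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Combine instructions, numbered source passages, citation rules, and the question.

    `passages` is a list of (source_name, text) pairs from the retriever.
    """
    # Number each passage so the model can cite it as [1], [2], ...
    context = "\n\n".join(
        f"[{i}] ({source}) {text}" for i, (source, text) in enumerate(passages, start=1)
    )
    return (
        f"{SYSTEM_INSTRUCTIONS}\n\n"
        f"Sources:\n{context}\n\n"
        "Cite sources by their bracketed number, for example [1].\n\n"
        f"Question: {question}"
    )
```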

Step 5. The LLM generates a grounded response

The model then synthesizes an answer based on the supplied context. Depending on the application, the output may be:

- A direct answer

- A summarized explanation

- A comparison

- A workflow guide

- A troubleshooting response

- A draft email, ticket reply, or case summary

In high-trust systems, the model is instructed to decline to answer when the sources are insufficient. This behavior matters because a clear “I could not find this” is safer than a fluent answer with no supporting evidence.
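Abstention can also be enforced before the model is called at all. In this sketch, `generate` is a hypothetical placeholder for whatever LLM client the application uses. The 0.35 score threshold is an arbitrary assumption that would need tuning against real retrieval scores. This pre-generation check complements the prompt instruction; it does not replace it.

```python
from typing import Callable


def answer_or_abstain(
    question: str,
    scored_passages: list[tuple[float, str]],
    generate: Callable[[str, list[str]], str],
    min_score: float = 0.35,
) -> str:
    """Call the model only when retrieval found sufficiently relevant evidence."""
    # Keep only passages whose retrieval score clears the threshold.
    evidence = [text for score, text in scored_passages if score >= min_score]
    if not evidence:
        # Abstain instead of letting the model answer from its training data alone.
        return "I could not find this in the available sources."
    return generate(question, evidence)
```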

Step 6. The system returns the answer with source traceability

One of RAG’s biggest strengths is transparency. Many implementations show the supporting passages, document names, hyperlinks, or citations used to generate the response.

This improves user trust and helps with governance, especially in legal, healthcare, financial, and compliance-heavy environments.
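A sketch of returning the answer together with its sources follows. It assumes each retrieved passage carries a document title and URL from ingestion. The field names and the example.com URLs are hypothetical. Several chunks often come from the same document, so the list is deduplicated.

```python
from dataclasses import dataclass


@dataclass
class SourcedPassage:
    title: str  # document name shown to the user
    url: str    # link back to the source system
    text: str   # retrieved passage that was placed in the prompt


def with_sources(answer: str, passages: list[SourcedPassage]) -> dict:
    """Package the model's answer with a deduplicated list of the documents behind it."""
    sources: list[dict] = []
    for p in passages:
        entry = {"title": p.title, "url": p.url}
        # Several chunks can come from the same document; list each document once.
        if entry not in sources:
            sources.append(entry)
    return {"answer": answer, "sources": sources}
```

A user interface can then render `sources` as links beneath the answer.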

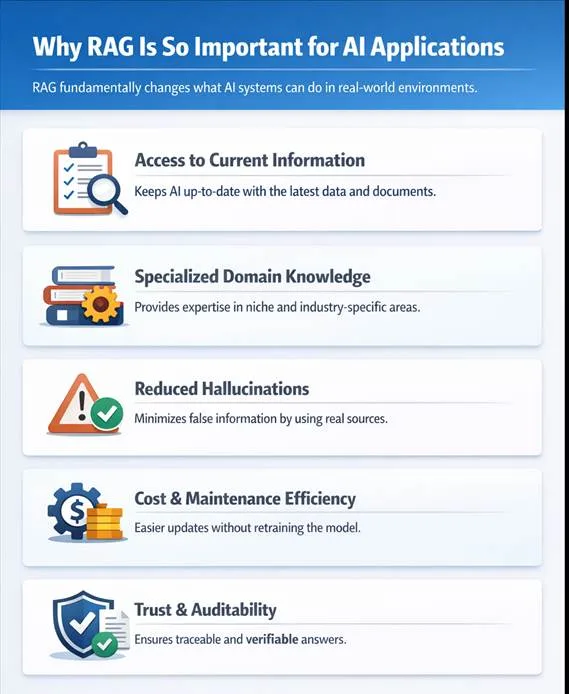

Why RAG Is So Important for AI Applications

RAG is important because it fundamentally changes what AI systems can do in real-world environments. Here are the main reasons:

1.) It gives AI access to current information

A base model has a training cutoff. It does not automatically know your latest pricing sheet, internal SOP, release documentation, or compliance update. RAG solves that by retrieving current information at query time.

2.) It makes AI useful for specialized domains

Generic language models are broad, but enterprise use cases are narrow and domain-heavy. A support assistant for SAP, Dynamics 365, insurance claims, or HR policy needs access to precise internal knowledge. RAG grounds the model in that domain without retraining the base model every time content changes.

3.) It reduces hallucination risk

RAG does not eliminate hallucinations completely, but it can significantly reduce them by constraining the model to work from retrieved evidence. The model is less likely to invent unsupported details when its answer is constrained by real source material.

4.) It improves maintainability and cost efficiency

Updating a RAG system usually means updating documents, reindexing content, or refreshing connectors. That is far more practical than retraining or fine-tuning a model every time knowledge changes.
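A sketch of that update path follows. It assumes each document has a stable ID and that the index stores a content hash per document; both are assumptions about how the index is organized. Only new or changed documents are re-chunked and re-embedded, documents no longer in the source are deleted, and the model itself is never touched.

```python
import hashlib


def content_hash(text: str) -> str:
    """Fingerprint a document's text so changes can be detected cheaply."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(current_docs: dict[str, str], indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Decide which documents to (re)index and which to drop, by comparing content hashes.

    current_docs: doc_id -> latest text from the source system
    indexed_hashes: doc_id -> hash of the text currently in the index
    """
    upsert = sorted(
        doc_id for doc_id, text in current_docs.items()
        if indexed_hashes.get(doc_id) != content_hash(text)  # new or changed
    )
    delete = sorted(doc_id for doc_id in indexed_hashes if doc_id not in current_docs)
    return {"upsert": upsert, "delete": delete}
```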

5.) It supports trust, auditability, and governance

In enterprise AI, being correct is not enough. Teams also need to know where the answer came from. RAG supports explainable AI by linking answers to sources, which is essential for audit trails and policy-sensitive workflows.
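An audit trail can start as simply as logging, for every answer, which sources were supplied to the model. The record below is a minimal sketch. Its field names are hypothetical, and a real deployment would also decide on retention rules, access controls, and where the log is stored.

```python
import json
from datetime import datetime, timezone


def audit_record(user_id: str, query: str, source_ids: list[str], answer: str) -> str:
    """Serialize one question-answer exchange, with the sources used, as a JSON log line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "query": query,
        "source_ids": source_ids,  # chunks supplied to the model as context for this answer
        "answer": answer,
    }
    return json.dumps(record)
```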

RAG is the Future of Context-Aware Enterprise AI

As AI systems evolve, RAG will become the backbone of intelligent enterprise applications, where models don’t just generate responses, but reason over live, trusted data. This will reshape how organizations interact with knowledge, moving toward systems that are continuously updated, auditable, and decision-ready.

Stay tuned for more such expert insights on AI, enterprise architectures, and digital transformation.

FAQs

1. Is RAG the same as fine-tuning?

RAG is not the same as fine-tuning. Fine-tuning updates a model's weights through additional training to change its behavior. RAG leaves the model unchanged and retrieves external knowledge at query time to improve response accuracy.

2. Can RAG handle structured data?

RAG can work with both structured data like databases and unstructured data like documents, enabling more comprehensive and context-rich responses.
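For structured data, one common approach is to serialize each row into a self-describing text passage, which is then chunked and embedded like any document. Another common approach is to have the model generate a database query instead. The sketch below shows only the first approach, and the table and column names in its usage are hypothetical.

```python
def row_to_passage(table: str, row: dict) -> str:
    """Render one database row as a self-describing text passage for embedding and retrieval."""
    # Skip empty fields so they do not add noise to the passage.
    fields = "; ".join(f"{col}: {val}" for col, val in row.items() if val is not None)
    return f"{table} record. {fields}"
```

For example, `row_to_passage("invoices", {"invoice_id": "INV-1001", "status": "blocked", "reason": "price variance"})` yields "invoices record. invoice_id: INV-1001; status: blocked; reason: price variance".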

3. Does RAG eliminate hallucinations?

RAG reduces hallucinations by grounding responses in retrieved data, but it still requires proper system design and validation to ensure accuracy.

4. What is the biggest challenge in RAG?

The biggest challenge in RAG is ensuring high-quality retrieval, as irrelevant or weak context can lead to inaccurate or misleading outputs.
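Retrieval quality can be measured separately from answer quality. One standard metric is recall@k: for each test query, the fraction of its known-relevant chunks that appear in the top k results. The sketch below assumes a hand-labeled set of queries with their relevant chunk IDs, which teams usually have to build themselves. The IDs in the usage are hypothetical.

```python
def recall_at_k(results: dict[str, list[str]], relevant: dict[str, set[str]], k: int) -> float:
    """Average, over labeled queries, of the share of relevant chunks found in the top k results."""
    scores = []
    for query, gold in relevant.items():
        if not gold:
            continue  # a query with no labeled relevant chunks cannot be scored
        top_k = set(results.get(query, [])[:k])
        scores.append(len(top_k & gold) / len(gold))
    return sum(scores) / len(scores) if scores else 0.0
```

For instance, suppose `q1` retrieves `["c1", "c9", "c2"]` against relevant chunks `{"c1", "c2"}`, and `q2` finds none of its one relevant chunk. The score is then 0.25 at k=2 and 0.5 at k=3. A low score points to chunking, embedding, or indexing problems before any prompt tuning is attempted.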