Create a Large Language Model

In the era of artificial intelligence, large language models have become the cornerstone of numerous applications, ranging from question answering and summarization to creative content generation. These models, such as the GPT (Generative Pre-trained Transformer) series, have captured the attention of researchers and developers worldwide due to their remarkable ability to understand and generate human-like text. However, creating these behemoths involves a complex interplay of data, algorithms, and computational resources.

Understanding Large Language Models:

Large language models are neural network architectures trained on vast amounts of text data to understand and generate human-like text. They employ techniques from deep learning, particularly transformers, to process and generate sequences of text efficiently. The success of large language models can be attributed to their ability to learn from massive datasets, capturing intricate patterns and nuances of human language.

Key Components of Large Language Models:

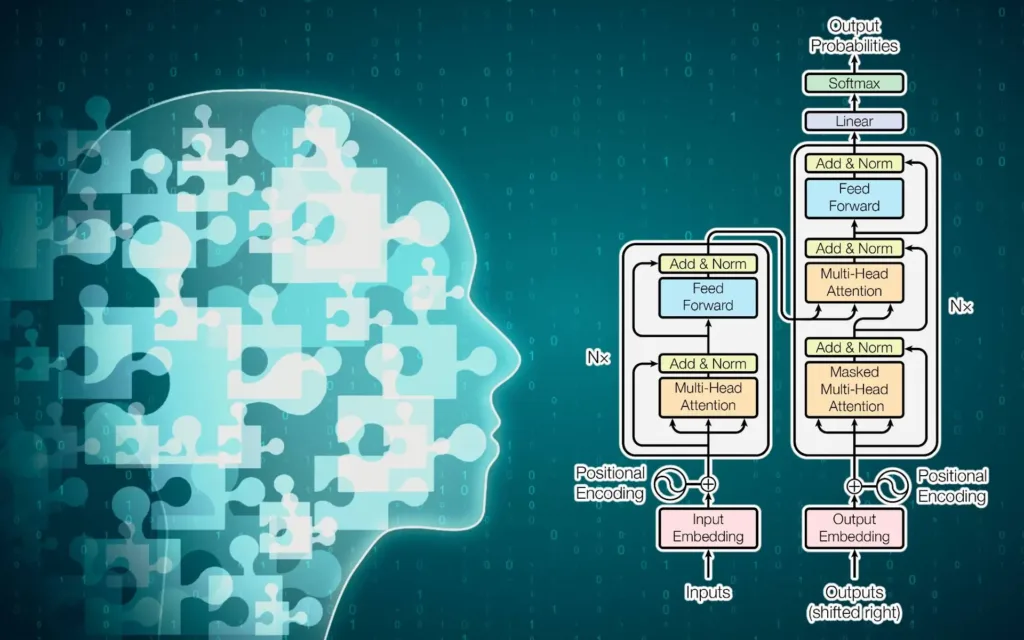

- Transformer Architecture: At the heart of large language models lies the transformer architecture. Transformers revolutionized natural language processing (NLP) with their attention mechanisms, enabling models to capture long-range dependencies in text efficiently. The original transformer stacks encoder and decoder layers. Encoder-only models such as BERT read context in both directions, which suits understanding tasks, while decoder-only models such as GPT attend only to preceding tokens and generate text left to right.

- Pre-training and Fine-tuning: Large language models are typically pre-trained on massive text corpora using self-supervised learning, which needs no human-written labels. During pre-training, a GPT-style model learns to predict the next token in a sequence given the preceding context (BERT-style models instead predict masked-out tokens). This process gives the model broad knowledge of vocabulary, grammar, and usage. Following pre-training, fine-tuning is conducted on specific downstream tasks, such as text classification or language generation, to adapt the model to a particular application.

- Data: Data is the lifeblood of large language models. These models require vast amounts of text data to learn effectively. Common sources of data include books, articles, websites, and social media posts. The diversity and quality of the training data significantly impact the performance and generalization capabilities of the model.

- Computational Resources: Building large language models demands immense computational resources, including powerful GPUs or TPUs (Tensor Processing Units) and distributed computing frameworks. Training such models often necessitates extensive hardware infrastructure and substantial time investments.
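
To make the attention mechanism described above more concrete, here is a minimal sketch of single-head scaled dot-product self-attention. It uses only the Python standard library so it runs anywhere; real models rely on tensor libraries and many attention heads. The function names (`softmax`, `matmul`, `self_attention`), the identity projection matrices, and the three toy token vectors are assumptions made for illustration, not part of any particular model.

```python
import math

def softmax(row):
    # Subtract the maximum for numerical stability before exponentiating.
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    # Plain nested-list matrix product: (n x k) @ (k x m) -> (n x m).
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def self_attention(x, w_q, w_k, w_v, causal=False):
    """Single-head scaled dot-product self-attention over a list of token vectors."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    weights = []
    for i, q_i in enumerate(q):
        # Similarity of token i's query with every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q_i, k_j)) / math.sqrt(d_k) for k_j in k]
        if causal:
            # Decoder-style masking: token i may only attend to positions 0..i.
            scores = [s if j <= i else float("-inf") for j, s in enumerate(scores)]
        weights.append(softmax(scores))
    # Each output vector is a weighted average of the value vectors.
    return matmul(weights, v), weights

# Toy input: three 2-dimensional token vectors and identity projections.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(x, identity, identity, identity, causal=True)
for row in weights:
    print([round(w, 3) for w in row])
```

With `causal=True` the first token can only attend to itself, which mirrors how GPT-style models generate text left to right. Setting `causal=False` gives the bidirectional behavior of encoder-style models.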

LLM Development Overview

| Stage | Key Focus | Tools & Techniques |
| --- | --- | --- |
| Data Strategy | Collection & Ethics | Web Scraping, Diverse Corpora, Data Cleaning |
| Preparation | Pre-processing | Tokenization, Normalization, Chunking |
| Architecture | Model Selection | Transformers (GPT, BERT, T5) |
| Development | Training | Self-attention, Backpropagation, GPUs/TPUs |
| Validation | Evaluation | Perplexity, BLEU Score, Accuracy Metrics |
| Optimization | Fine-tuning | Task-specific training, Domain adaptation |
| Execution | Deployment | API Integration, Cloud Infrastructure, Web Services |

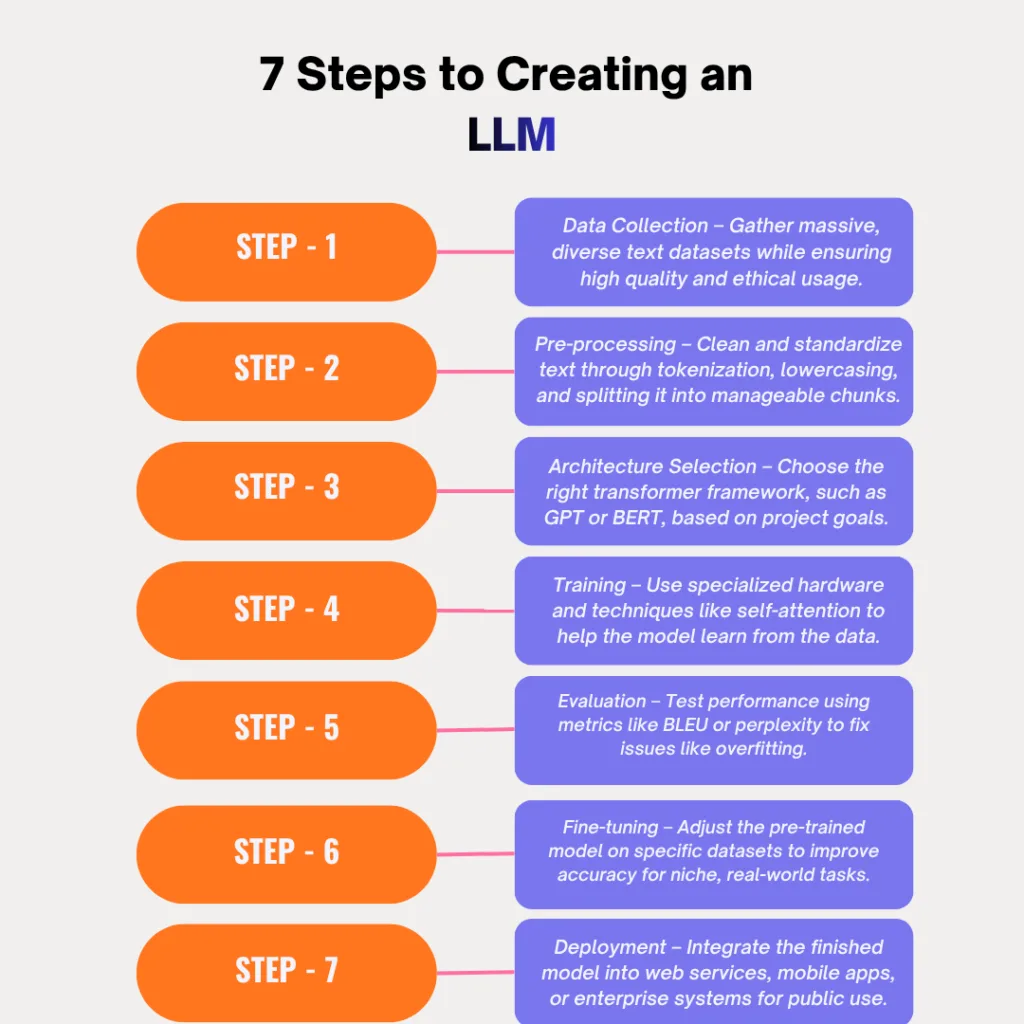

Steps to Create Large Language Models:

- Data Collection: The initial step involves gathering a diverse and extensive dataset of text. This dataset serves as the foundation for training the language model. Data quality, relevance, and the ethics of data usage all need careful attention.

- Pre-processing: Once the data is collected, it undergoes pre-processing to clean and standardize the text. This involves tasks such as tokenization, lowercasing, removing special characters, and splitting the text into manageable chunks for training.

- Model Architecture Selection: Depending on the requirements and available resources, the appropriate transformer architecture is chosen for the language model. Popular choices include GPT (Generative Pre-trained Transformer) and BERT (Bidirectional Encoder Representations from Transformers).

- Training: Training a large language model is a computationally intensive process that typically occurs on specialized hardware infrastructure. The model, built from self-attention layers, is trained with backpropagation and an optimization algorithm such as Adam or SGD (Stochastic Gradient Descent).

- Evaluation: Throughout the training process, the model’s performance is evaluated on validation datasets to monitor its progress and identify potential issues such as overfitting or underfitting. Evaluation metrics may include perplexity, BLEU score, or accuracy on downstream tasks.

- Fine-tuning: Once the model is pre-trained, it can be fine-tuned on specific downstream tasks by further training on task-specific datasets. Fine-tuning allows the model to adapt its learned representations to the nuances of the target task, enhancing performance.

- Deployment: After training and fine-tuning, the language model is ready for deployment in real-world applications. Deployment involves integrating the model into the target application environment, whether it’s a web service, mobile app, or enterprise system.
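
The pre-processing step in the list above can be sketched in a few lines. This is a simplified illustration, not a production pipeline: it assumes English text, whitespace tokenization, and a hypothetical `block_size` of 4, whereas real systems usually use subword tokenizers and much longer windows. All function names are made up for this example.

```python
import re

def normalize(text):
    # Lowercase, replace anything other than ASCII letters, digits, and
    # whitespace with a space, then collapse runs of whitespace.
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return re.sub(r"\s+", " ", text).strip()

def tokenize(text):
    # Whitespace tokenization, chosen for brevity (an assumption).
    return normalize(text).split()

def build_vocab(tokens):
    # Map each distinct token to an integer id, in first-seen order.
    vocab = {}
    for tok in tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab

def chunk(ids, block_size):
    # Split the id stream into fixed-size windows. Each window yields an
    # (input, target) pair where the target is the input shifted by one,
    # which is the shape of data used for next-token prediction.
    pairs = []
    for start in range(0, len(ids) - block_size, block_size):
        window = ids[start:start + block_size + 1]
        pairs.append((window[:-1], window[1:]))
    return pairs

raw = "Hello, World! Large language models learn from text. Hello again, world."
tokens = tokenize(raw)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]
pairs = chunk(ids, block_size=4)

print(tokens)
for inputs, targets in pairs:
    print(inputs, "->", targets)
```

Leftover tokens that do not fill a complete window are dropped here for simplicity; padding or overlapping windows are common alternatives.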


Challenges and Considerations:

Building large language models comes with several challenges:

- Ethical Considerations: Using large language models raises ethical concerns regarding data privacy, biases in the training data, and potential misuse of AI-generated content.

- Resource Intensiveness: Training large language models requires substantial computational resources, which may be prohibitive for smaller organizations or researchers with limited access to such resources.

- Model Interpretability: Understanding and interpreting the inner workings of large language models remains a significant challenge, particularly in complex, high-dimensional neural networks.
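
The resource point above can be made concrete with back-of-the-envelope arithmetic. The sketch below assumes mixed-precision training with the Adam optimizer, where a commonly cited accounting is about 16 bytes per parameter: 2 for half-precision weights, 2 for gradients, and 12 for the full-precision weight copy plus Adam's two moment estimates. It ignores activations, batch size, and framework overhead, so treat the result as a rough lower bound. The function names and the 7-billion-parameter model size are hypothetical.

```python
def training_state_gb(n_params, bytes_per_param=16):
    # Weights, gradients, and optimizer state only (assumed 16 bytes/param
    # for mixed-precision Adam); activations are not counted.
    return n_params * bytes_per_param / 1e9

def inference_weights_gb(n_params, bytes_per_param=2):
    # Memory to hold the weights alone in 16-bit precision.
    return n_params * bytes_per_param / 1e9

n_params = 7_000_000_000  # hypothetical model size
print(f"training state:    {training_state_gb(n_params):.0f} GB")
print(f"inference weights: {inference_weights_gb(n_params):.0f} GB")
```

Under these assumptions, even the training state of a mid-sized model exceeds the memory of a single typical accelerator, which is why training is usually distributed across many devices.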

Conclusion

The creation of Large Language Models is a sophisticated journey that blends massive data acquisition with cutting-edge transformer architectures. While the process demands significant computational power and meticulous fine-tuning, the result is a transformative tool capable of bridging the gap between human communication and machine logic. By balancing technical precision with ethical data practices, developers can harness these “behemoths” to solve complex problems, automate intelligent workflows, and unlock new frontiers in generative AI. As hardware becomes more accessible and algorithms more efficient, the potential for custom LLMs to reshape industries continues to grow.

Frequently Asked Questions

What is the most critical component in creating an LLM?

Data is often considered the lifeblood of any large language model. The quality, diversity, and scale of the dataset directly determine how well the model understands linguistic nuances and generalizes across different tasks.

Why is the Transformer architecture preferred over older models?

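Transformers use “attention mechanisms,” which let the model relate every token to every other token in its context and capture long-range dependencies more efficiently than earlier recurrent architectures. Attention also parallelizes well on modern hardware. Encoder-style models such as BERT use context in both directions, while GPT-style models attend only to preceding tokens.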

What is the difference between pre-training and fine-tuning?

Pre-training involves teaching the model general language patterns using massive, unlabeled datasets. Fine-tuning is the subsequent step where the model is trained on a smaller, specific dataset to excel at a particular task, like legal drafting or sentiment analysis.
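
As a toy illustration of the two phases, the sketch below trains a tiny bigram model (one row of logits per previous word) with gradient descent, first on a "general" corpus and then on a small "domain" corpus. Everything here is an assumption made for illustration: the two corpora, the learning rates, the step counts, and the bigram model itself, which stands in for a transformer only to show the workflow of continuing training on task-specific data.

```python
import math

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def pairs_from(text, vocab):
    # Turn a text into (previous word id, next word id) training pairs.
    ids = [vocab[w] for w in text.split()]
    return list(zip(ids, ids[1:]))

def loss(weights, pairs):
    # Mean cross-entropy of the true next word under the model.
    return -sum(math.log(softmax(weights[p])[n]) for p, n in pairs) / len(pairs)

def train(weights, pairs, lr, steps):
    # Full-batch gradient descent on the cross-entropy loss, updating in place.
    for _ in range(steps):
        grads = [[0.0] * len(weights) for _ in weights]
        for prev, nxt in pairs:
            probs = softmax(weights[prev])
            for j, p in enumerate(probs):
                # Gradient of cross-entropy w.r.t. logits: probs - one_hot(target).
                grads[prev][j] += (p - (1.0 if j == nxt else 0.0)) / len(pairs)
        for i, row in enumerate(grads):
            for j, g in enumerate(row):
                weights[i][j] -= lr * g
    return weights

# Hypothetical toy corpora.
general = "the cat sat on the mat the dog sat on the rug"
domain = "the court sat on the motion the court ruled on the motion"

vocab = {w: i for i, w in enumerate(sorted(set((general + " " + domain).split())))}
weights = [[0.0] * len(vocab) for _ in vocab]

train(weights, pairs_from(general, vocab), lr=1.0, steps=200)  # "pre-training"
before = loss(weights, pairs_from(domain, vocab))
train(weights, pairs_from(domain, vocab), lr=0.5, steps=50)    # "fine-tuning"
after = loss(weights, pairs_from(domain, vocab))

print(f"domain loss before fine-tuning: {before:.3f}")
print(f"domain loss after fine-tuning:  {after:.3f}")
```

The fine-tuning phase reuses the pre-trained weights rather than starting from scratch, and it uses fewer steps and a smaller learning rate, which reflects common practice.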

How do you measure if an LLM is performing well?

Performance is typically measured using metrics such as perplexity (how well the model predicts held-out text, where lower is better) and BLEU scores (the n-gram overlap between generated text and a reference, where higher is better), alongside accuracy tests on downstream tasks.
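
Both metrics are straightforward to compute in simplified form. In the sketch below, `perplexity` takes the probabilities a model assigned to each correct token and returns the exponential of the average negative log-likelihood. `clipped_precision` computes the clipped n-gram precision that BLEU is built on; full BLEU additionally combines several n-gram orders and applies a brevity penalty, which this example omits. The probability lists and sentences are made-up inputs.

```python
import math
from collections import Counter

def perplexity(token_probs):
    # exp(average negative log-likelihood) over the evaluated tokens.
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

def clipped_precision(candidate, reference, n=1):
    # Fraction of candidate n-grams that also occur in the reference, with
    # each n-gram credited at most as often as it appears in the reference.
    cand = Counter(tuple(candidate[i:i + n]) for i in range(len(candidate) - n + 1))
    ref = Counter(tuple(reference[i:i + n]) for i in range(len(reference) - n + 1))
    overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
    return overlap / max(sum(cand.values()), 1)

confident = [0.9, 0.8, 0.95, 0.85]  # model assigns high probability to each true token
uniform = [0.25] * 4                # model guesses uniformly among 4 options

print(f"perplexity (confident): {perplexity(confident):.2f}")
print(f"perplexity (uniform):   {perplexity(uniform):.2f}")

candidate = "the the the cat".split()
reference = "the cat sat".split()
print(f"clipped unigram precision: {clipped_precision(candidate, reference):.2f}")
```

A uniform guess over four options gives a perplexity of about 4, which is why perplexity is often read as the effective number of choices the model is weighing at each step.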

What are the main challenges in building these models?

The primary hurdles include the immense computational power required (GPUs/TPUs), the high cost of infrastructure, and ethical concerns regarding biases present in the training data.