Statistics is not merely a branch of mathematics but a powerful tool that permeates almost every field of science, from economics to biology, from psychology to engineering. At the heart of many statistical concepts lies the Central Limit Theorem (CLT), a fundamental principle that underpins our understanding of random variables and their distributions.

Unveiling the Central Limit Theorem

Imagine you have a population with a distribution of any shape: it could be uniform, heavily skewed, or completely irregular. If you draw many random samples of the same size from this population and calculate the mean of each sample, a pattern emerges. As the sample size increases, the distribution of those sample means approaches a bell-shaped curve, known as the Normal Distribution.

Understanding the Mechanics

The CLT provides a predictable framework for how sample means behave. It tells us that as the sample size ($n$) increases, the sampling distribution of the mean gets closer and closer to normal, regardless of the original population’s shape, provided the population has a finite variance.

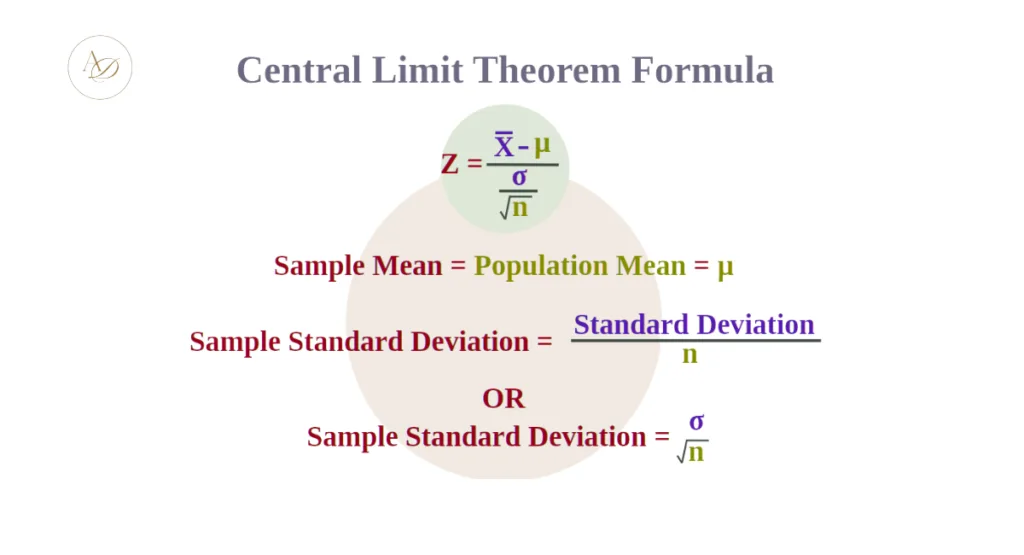
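The behavior described above can be checked with a small simulation. The sketch below is illustrative only: the Exponential(1) population (mean 1, standard deviation 1), the function names `sample_means` and `share_within`, and the number of repetitions are all assumptions chosen for the example. It measures what share of sample means falls within one and two standard errors of the population mean; for a normal distribution those shares are roughly 68% and 95%.

```python
import numpy as np


def sample_means(sample_size, num_samples=20_000, seed=0):
    """Draw `num_samples` samples of size `sample_size` from a skewed
    Exponential(1) population and return the mean of each sample."""
    rng = np.random.default_rng(seed)
    draws = rng.exponential(scale=1.0, size=(num_samples, sample_size))
    return draws.mean(axis=1)


def share_within(means, sample_size, k, mu=1.0, sigma=1.0):
    """Fraction of sample means lying within k standard errors of mu.

    mu and sigma default to the mean and SD of the Exponential(1) population.
    """
    standard_error = sigma / np.sqrt(sample_size)
    return float(np.mean(np.abs(means - mu) <= k * standard_error))


# Compare the shares against the normal benchmarks (about 0.683 and 0.954).
for n in (1, 5, 30, 100):
    means = sample_means(n)
    print(
        f"n={n:>3}  within 1 SE: {share_within(means, n, 1):.3f}  "
        f"within 2 SE: {share_within(means, n, 2):.3f}"
    )
```

With $n = 1$ the shares reflect the skewed population itself; as $n$ grows they should move toward the normal benchmarks.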

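Mathematically, the Central Limit Theorem is expressed as:

$$\bar{X} \sim N\left(\mu, \frac{\sigma^2}{n}\right) \quad \text{(approximately, for large } n\text{)}$$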

Where:

- $\bar{X}$: The sample mean.

- $\mu$: The population mean.

- $\sigma$: The population standard deviation.

- $n$: The sample size.

This formula demonstrates that the sampling distribution will have a mean equal to the population mean ($\mu$), but its spread (standard deviation) will be $\sigma / \sqrt{n}$, smaller than the population standard deviation by a factor of $\sqrt{n}$. This reduced spread is known as the Standard Error.
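As a rough check of the $\sigma / \sqrt{n}$ relationship, the following sketch compares the theoretical standard error with the standard deviation of simulated sample means. The Uniform(0, 1) population (whose standard deviation is $1/\sqrt{12}$), the helper names, and the sample sizes are assumptions made for illustration.

```python
import numpy as np

# Standard deviation of a Uniform(0, 1) population (assumed for this example).
POPULATION_SIGMA = 1 / np.sqrt(12)


def theoretical_standard_error(sigma, n):
    """Standard error of the mean predicted by the formula sigma / sqrt(n)."""
    return float(sigma / np.sqrt(n))


def simulated_standard_error(n, num_samples=20_000, seed=1):
    """Standard deviation of the means of many simulated samples of size n."""
    rng = np.random.default_rng(seed)
    means = rng.uniform(0.0, 1.0, size=(num_samples, n)).mean(axis=1)
    return float(means.std(ddof=1))


for n in (4, 16, 64):
    theory = theoretical_standard_error(POPULATION_SIGMA, n)
    simulated = simulated_standard_error(n)
    print(f"n={n:>2}  theoretical SE={theory:.4f}  simulated SE={simulated:.4f}")
```

Each fourfold increase in $n$ should cut both columns roughly in half.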

CLT at a Glance: Key Components

| Feature | Description |
| --- | --- |
| Core Concept | Sample means will be approximately normally distributed for large $n$, even if the population is not. |
| Mean ($\mu$) | The mean of the sample means is equal to the population mean. |
| Standard Error | Calculated as $\sigma / \sqrt{n}$; it decreases as sample size increases. |
| Rule of Thumb | Usually $n \geq 30$ is considered sufficient for the normal approximation to be reasonable. |

Real-World Implications

The Central Limit Theorem holds profound implications for both theoretical statistics and practical applications across various domains. Here are some key implications:

- Inference and Estimation: The CLT forms the basis for many statistical inference procedures, such as hypothesis testing and confidence interval estimation. It allows us to make inferences about population parameters based on sample statistics.

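- Sample Size Determination: Understanding the CLT helps researchers determine the appropriate sample size for their studies. Because the standard error is $\sigma / \sqrt{n}$, larger samples produce more precise estimates of population parameters, but with diminishing returns: halving the standard error requires four times as many observations.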

- Quality Control: In fields like manufacturing and quality control, the CLT is instrumental in analyzing and monitoring processes. It enables practitioners to assess whether variations in product quality are within acceptable limits.

- Economics and Finance: The CLT is central to risk management, asset pricing models, and financial forecasting. It allows analysts to make robust predictions about future outcomes despite the uncertainty inherent in financial markets.

- Biostatistics and Epidemiology: In healthcare and epidemiological studies, the CLT facilitates the analysis of medical data, enabling researchers to draw meaningful conclusions about the effectiveness of treatments or the spread of diseases.
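Two of the applications listed above, confidence interval estimation and sample size determination, can be sketched in a few lines using only the Python standard library. This is a minimal illustration, not a definitive procedure: the function names and the measurement data are hypothetical, and the interval assumes the sample is large enough that the sample standard deviation can stand in for $\sigma$ and the normal approximation is reasonable.

```python
import math
from statistics import NormalDist, mean, stdev


def mean_confidence_interval(sample, confidence=0.95):
    """Normal-approximation (CLT-based) confidence interval for the mean.

    Assumption: the sample standard deviation is used in place of the
    unknown population sigma, which is only reasonable for larger samples.
    """
    n = len(sample)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # e.g. about 1.96 for 95%
    standard_error = stdev(sample) / math.sqrt(n)
    center = mean(sample)
    return center - z * standard_error, center + z * standard_error


def required_sample_size(sigma, margin_of_error, confidence=0.95):
    """Smallest n for which z * sigma / sqrt(n) <= margin_of_error."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return math.ceil((z * sigma / margin_of_error) ** 2)


# Hypothetical measurements, repeated to give n = 40 for the illustration.
measurements = [9.8, 10.1, 10.4, 9.9, 10.0, 10.3, 9.7, 10.2] * 5
low, high = mean_confidence_interval(measurements)
print(f"95% CI for the mean: ({low:.3f}, {high:.3f})")
print("n needed for a margin of 2 when sigma is 15:", required_sample_size(15, 2))
```

Both functions rest on the same fact: the sample mean varies around $\mu$ with a spread of about $\sigma / \sqrt{n}$.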

Limitations and Assumptions

While the Central Limit Theorem is a powerful tool, it’s essential to recognize its limitations and the assumptions underlying its applicability:

- Sample Size Requirement: The CLT assumes that the sample size is sufficiently large. While there is no strict rule for what constitutes a “sufficiently large” sample size, a commonly cited guideline is $n \geq 30$. However, this threshold can vary depending on the shape of the population distribution.

- Independence Assumption: The observations in a sample must be independent of each other and drawn from the same distribution. In practical scenarios, this assumption may be violated if, for example, observations are taken from a time series where values are correlated over time.

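- Finite Variance: The CLT requires that the population from which the samples are drawn have a finite variance. For heavy-tailed populations with infinite variance, such as the Cauchy distribution, the classical CLT does not hold and sample means do not settle into a normal distribution.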
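The finite-variance requirement can be illustrated by comparing a well-behaved population with a heavy-tailed one. In the sketch below, the choice of an Exponential(1) population versus a standard Cauchy population, the helper name `iqr_of_sample_means`, and the use of the interquartile range as the measure of spread are all assumptions made for the example. If the CLT applies, the spread of the sample means should shrink as $n$ grows; for the Cauchy case it should not.

```python
import numpy as np


def iqr_of_sample_means(sampler, sample_size, num_samples=4_000):
    """Interquartile range of the means of `num_samples` simulated samples.

    `sampler` is any callable that takes a shape tuple and returns draws.
    """
    means = sampler((num_samples, sample_size)).mean(axis=1)
    q25, q75 = np.percentile(means, [25, 75])
    return float(q75 - q25)


rng = np.random.default_rng(7)

for n in (10, 100, 1000):
    finite_variance = iqr_of_sample_means(lambda size: rng.exponential(1.0, size), n)
    infinite_variance = iqr_of_sample_means(lambda size: rng.standard_cauchy(size), n)
    print(
        f"n={n:>4}  exponential IQR={finite_variance:.3f}  "
        f"Cauchy IQR={infinite_variance:.3f}"
    )
```

The interquartile range is used instead of the standard deviation because the standard deviation of Cauchy sample means is itself unstable.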

Conclusion

The Central Limit Theorem stands as a cornerstone of modern statistics, providing a powerful framework for understanding the behavior of sample means and their distributions. Its implications extend well beyond theoretical statistics, shaping how we draw conclusions from data across diverse fields. By understanding the CLT, along with its assumptions and limits, statisticians, researchers, and practitioners can quantify uncertainty with confidence and precision.

Frequently Asked Questions (FAQ)

1. Why is the Central Limit Theorem so important?

It allows statisticians to use the properties of the normal distribution to make inferences about a population, even when the population distribution is unknown or non-normal.

2. Does the population have to be normal for CLT to work?

No. That is the “magic” of the CLT: the population can have almost any shape, as long as its variance is finite. It is the distribution of the sample means that becomes approximately normal as the sample size grows.

3. What happens if my sample size is less than 30?

If the sample size is small, the CLT might not apply unless the underlying population is already normally distributed. For non-normal populations with small $n$, the normal approximation can be poor, so confidence intervals and p-values based on it may be inaccurate.
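One way to see the small-sample issue is to measure how lopsided the distribution of sample means still is at different sample sizes. The sketch below is an illustration under assumptions: the Exponential(1) population, the function names, and the sample sizes are chosen for the example. It computes the skewness of simulated sample means, which is 0 for a perfectly symmetric (for example, normal) distribution.

```python
import numpy as np


def skewness(values):
    """Moment-based sample skewness; 0 indicates a symmetric distribution."""
    centered = values - values.mean()
    return float((centered**3).mean() / (centered**2).mean() ** 1.5)


def skewness_of_sample_means(sample_size, num_samples=20_000, seed=3):
    """Skewness of the means of samples drawn from an Exponential(1) population."""
    rng = np.random.default_rng(seed)
    means = rng.exponential(1.0, size=(num_samples, sample_size)).mean(axis=1)
    return skewness(means)


for n in (5, 30, 100):
    print(f"n={n:>3}  skewness of sample means={skewness_of_sample_means(n):.3f}")
```

For this population the skewness of the sample mean is $2 / \sqrt{n}$ in theory, so it fades only gradually, which is why a strongly skewed population can need more than 30 observations.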