Beta testing in SaaS is a crucial phase where real users interact with your product before its official release. Gathering meaningful feedback during this stage helps companies refine features, fix bugs, and enhance usability.

Without structured feedback collection, beta testing can result in noise, conflicting opinions, or missed opportunities to address core issues.

This article explains how SaaS businesses can design effective beta testing programs, engage the right users, and implement feedback loops that lead to measurable product improvements.

By focusing on actionable insights and clear communication, beta testing can become a powerful tool for building better products and stronger customer relationships.

Why Beta Testing in SaaS Is Essential for Product Development

Beta testing in SaaS plays a vital role in ensuring that new features and improvements meet user expectations before a full-scale launch. It allows companies to identify real-world issues that might not appear during internal testing. For example, a feature that works smoothly in a controlled environment may behave differently when used by customers with various devices, network speeds, or workflows.

Beyond uncovering bugs, beta testing helps refine the user experience by collecting feedback on navigation, interface design, and usability. This helps developers prioritize the adjustments that most directly affect customer satisfaction. Early feedback also validates new functionality, confirming that product changes align with user needs before they reach every customer.

Another key benefit is risk reduction. Launching a product with unresolved issues can lead to customer dissatisfaction, negative reviews, and lost trust. Beta testing allows teams to address potential pitfalls before they affect a wider audience. It also gives stakeholders a better understanding of product readiness, which is essential for planning marketing, support, and scalability efforts.

Overall, beta testing in SaaS is a proactive step that strengthens product quality, builds customer confidence, and increases the likelihood of a successful launch.

How to Structure a Successful Beta Testing Program

A well-structured beta testing program ensures that feedback is useful, targeted, and aligned with business goals. Below are the key elements of an effective program.

- Selecting the Right Beta Testers

Choosing testers who represent diverse user personas helps ensure that feedback covers different workflows, devices, and usage patterns. Including both power users and beginners offers a balanced view of potential pain points and areas for improvement.

- Defining Clear Testing Goals

Setting specific, measurable objectives helps testers understand what to focus on. Whether it’s testing performance, exploring a new feature, or verifying usability, clear goals prevent vague feedback and ensure that insights are actionable.

- Creating Feedback Channels

Providing multiple avenues for feedback collection makes it easier for testers to share their experience. Surveys, interviews, and in-app feedback forms allow users to communicate problems or suggestions as they occur. Structured forms can guide them to report technical issues more effectively.

- Encouraging Continuous Engagement

Keeping testers motivated throughout the process is essential. Regular updates, recognition of top contributors, and transparent communication on how feedback is being used can maintain enthusiasm. Engaged testers are more likely to provide detailed and thoughtful input.

A structured approach helps turn beta testing into a powerful tool for product improvement and customer satisfaction.
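As an illustration of the structured forms mentioned under feedback channels, here is a minimal sketch of a feedback record that flags incomplete reports before they reach the product team. The field names, the `FeedbackReport` class, and the category list are all assumptions made for this example, not part of any particular tool.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical categories; adapt them to your own beta program.
ALLOWED_CATEGORIES = {"bug", "usability", "performance", "feature_request"}


@dataclass
class FeedbackReport:
    """One structured report from a beta tester (illustrative fields only)."""
    tester_id: str
    feature: str
    category: str
    steps_taken: str
    expected: str
    actual: str

    def problems(self) -> List[str]:
        """Return the reasons this report is incomplete (empty list if none)."""
        issues = []
        if self.category not in ALLOWED_CATEGORIES:
            issues.append(f"unknown category: {self.category}")
        # Require the three fields that make a report reproducible.
        for name in ("steps_taken", "expected", "actual"):
            if not getattr(self, name).strip():
                issues.append(f"missing field: {name}")
        return issues


complete = FeedbackReport(
    tester_id="t1",
    feature="export",
    category="bug",
    steps_taken="Clicked Export on the reports page",
    expected="A CSV file downloads",
    actual="The spinner never stops",
)
incomplete = FeedbackReport("t2", "export", "rant", "", "It should work", "It does not")

print(complete.problems())
print(incomplete.problems())
```

A form built this way can prompt the tester for the missing fields right away, instead of leaving the team to chase details later.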

Key Strategies for Collecting Actionable Feedback

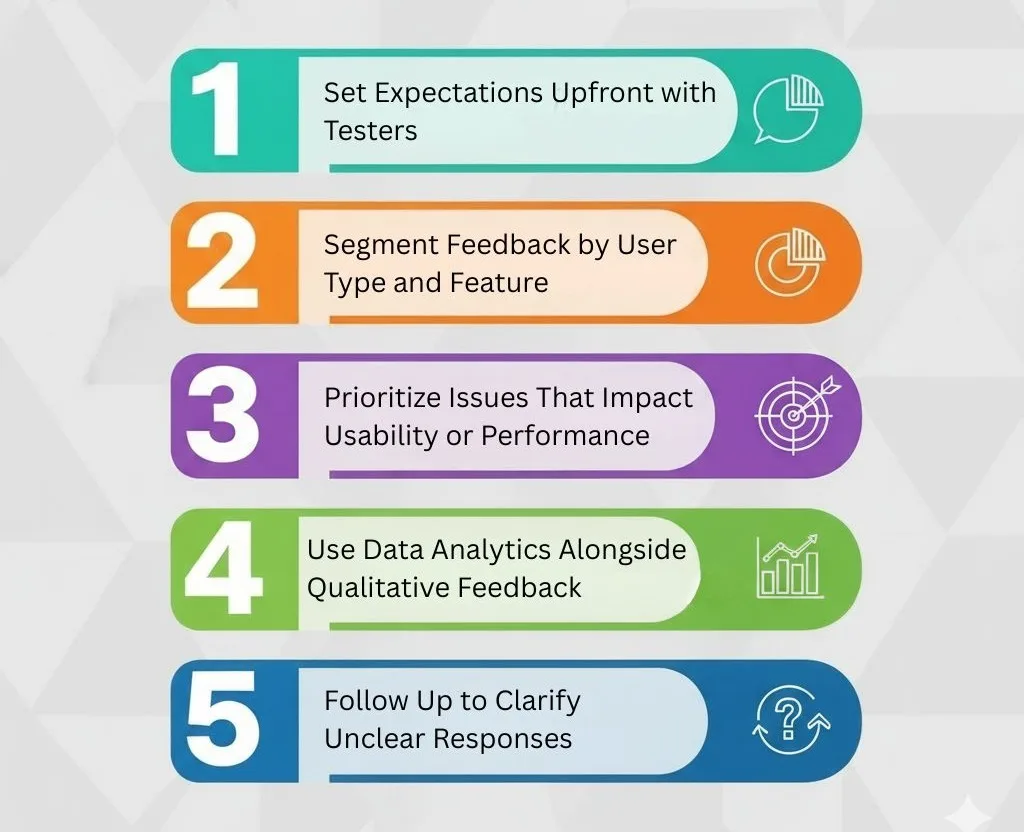

Collecting actionable feedback during beta testing in SaaS requires a structured approach. Here are key strategies that help turn user input into meaningful insights:

- Set Expectations Upfront with Testers

Clearly communicate the goals of the beta program, what types of feedback are most valuable, and the timeline for participation. This ensures that beta testers understand their role and provide targeted, useful feedback.

- Segment Feedback by User Type and Feature

Categorize responses according to user personas and the specific features being tested. This allows teams to identify trends within each group and prioritize fixes accordingly, which improves the overall customer experience.

- Prioritize Issues That Impact Usability or Performance

Focus on feedback that highlights critical functionality problems or performance bottlenecks. This approach ensures that product improvement efforts address the most impactful areas first.

- Use Data Analytics Alongside Qualitative Feedback

Combine quantitative data, like usage metrics or error logs, with qualitative input from testers. This dual approach helps teams validate insights and make data-driven decisions about feature testing and enhancements.

- Follow Up to Clarify Unclear Responses

Engage with testers to clarify ambiguous feedback. A short follow-up question often turns a vague comment into a specific report the team can act on, which makes the resulting product decisions more reliable.

Implementing these strategies helps SaaS teams extract maximum value from beta testing programs, transforming raw feedback into actionable improvements.
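The segmentation strategy above can be sketched in a few lines of code. This example counts feedback items per persona and feature so that clusters stand out. The sample records, the persona and feature names, and the `segment_counts` helper are invented for illustration. Real data would come from your survey or in-app feedback export.

```python
from collections import Counter

# Invented sample data: each item is tagged with who sent it and what it concerns.
feedback = [
    {"persona": "beginner", "feature": "onboarding", "note": "Setup wizard is confusing"},
    {"persona": "beginner", "feature": "onboarding", "note": "Could not find the import step"},
    {"persona": "power_user", "feature": "reporting", "note": "Export is slow on large accounts"},
    {"persona": "power_user", "feature": "onboarding", "note": "Wizard cannot be skipped"},
]


def segment_counts(items):
    """Count feedback items for each (persona, feature) pair."""
    return Counter((item["persona"], item["feature"]) for item in items)


counts = segment_counts(feedback)

# List segments from most to least reported.
for (persona, feature), n in counts.most_common():
    print(f"{persona:<12} {feature:<12} {n}")
```

Pairing these counts with usage metrics or error logs for the same feature is one way to check whether a frequently reported complaint reflects a widespread problem.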

Common Challenges in Beta Testing and How to Overcome Them

Even well-structured beta testing programs face obstacles. One major issue is irrelevant feedback, where testers focus on minor preferences rather than critical functionality. Clear instructions and goal-setting can help minimize this.

Low participation rates are another challenge. Engaging beta testers through incentives, recognition, or regular updates can maintain consistent involvement throughout the program.

Conflicting opinions may arise when different testers have opposing views on features or workflows. Segmenting feedback by user type and usage context helps teams make balanced decisions without over-prioritizing a single viewpoint.

Finally, managing feature requests without scope creep is critical. Product teams must distinguish between essential fixes for launch and nice-to-have enhancements that can be scheduled for later releases. Documenting all suggestions and maintaining transparent communication ensures testers feel heard without overwhelming development teams.

By anticipating these challenges and implementing proactive solutions, SaaS companies can maximize the effectiveness of their beta programs and enhance customer experience.

How Feedback-Driven Beta Testing Shapes SaaS Success

SaaS product development increasingly relies on continuous beta testing cycles and structured feedback collection. Companies are moving toward iterative testing, where user feedback guides both small updates and major feature rollouts.

Feedback-driven programs help maintain agility, allowing product teams to respond quickly to market demands and emerging user needs. Advanced analytics, AI-powered monitoring, and in-app feedback tools are enabling teams to collect more accurate insights with less friction.

This approach not only improves the product but also strengthens the customer experience, as users feel their input directly influences the product they rely on. Over time, this fosters trust, increases retention, and encourages advocacy.

As SaaS companies expand globally, leveraging structured beta programs and actionable feedback loops will become a key differentiator. Businesses that embrace this strategy are better positioned to deliver user-centric solutions, minimize launch risks, and sustain long-term growth in competitive markets.

FAQs

How do I find the right beta testers, not just any users?

Don’t just open the doors to everyone. Target users who represent your ideal customer profile (ICP). Recruit from your waitlist, existing user base (for new features), or communities where your potential users gather. The goal is quality feedback from your target market, not a large quantity of random opinions.

What’s the best way to structure questions to get actionable feedback, not just “I like it” or “It sucks”?

Avoid vague questions. Use open-ended questions that prompt detailed responses. Instead of “Do you like the feature?” ask:

“Walk me through the steps you took to complete [Task X].”

“What was the biggest obstacle you faced when trying to achieve [Goal Y]?”

“If you could change one thing about this workflow, what would it be and why?”

We’re getting a lot of feedback. How do we prioritize what to act on?

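Sort each piece of feedback by its impact on users and by how often it is reported, then work through the four groups in order:

High Impact / High Frequency: Critical bugs or missing features that affect many users. Fix these first.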

High Impact / Low Frequency: “Power user” feature requests or edge-case bugs. Schedule these.

Low Impact / High Frequency: Small UI annoyances or “nice-to-haves.” Address these in batches.

Low Impact / Low Frequency: Acknowledge, but likely deprioritize.
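The four groups above can be expressed as a small triage function. This is a minimal sketch under stated assumptions: the `triage` name, the "high"/"low" impact labels, and the 20% cutoff for "high frequency" are all hypothetical choices, and each team should set a threshold that fits the size of its own beta group.

```python
def triage(impact: str, affected_testers: int, total_testers: int,
           frequency_threshold: float = 0.2) -> str:
    """Map one feedback item to an action using impact and report frequency.

    frequency_threshold is an assumed cutoff: an issue counts as
    "high frequency" when at least that share of testers reported it.
    """
    if total_testers <= 0:
        raise ValueError("total_testers must be positive")

    high_impact = impact == "high"
    high_frequency = affected_testers / total_testers >= frequency_threshold

    if high_impact and high_frequency:
        return "fix first"
    if high_impact:
        return "schedule"
    if high_frequency:
        return "address in batches"
    return "acknowledge and deprioritize"


# Example: a beta group of 40 testers.
print(triage("high", 12, 40))  # critical bug reported by many testers
print(triage("high", 1, 40))   # edge-case bug from one power user
print(triage("low", 10, 40))   # small UI annoyance reported often
print(triage("low", 1, 40))    # rare nice-to-have
```

Keeping the rule this explicit makes it easier to explain to testers why a given request was scheduled for later rather than fixed before launch.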