One rule holds almost everywhere in machine learning: the more high-quality data you have, the better your models tend to work. But collecting and labeling data at scale is costly, time-consuming, and sometimes impossible in real-world situations. This is where data augmentation comes to the rescue.

Data augmentation lets you expand the size and diversity of your dataset without collecting more data.

Why Use Data Augmentation and What Is It?

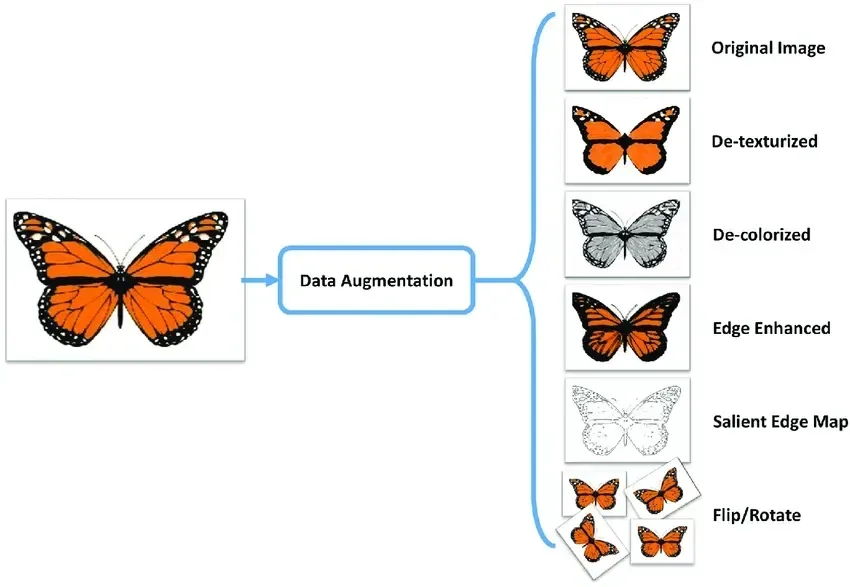

Data augmentation is a machine learning and deep learning technique that increases a dataset’s size and diversity by creating altered versions of existing data. Think of it as producing more training data without actually collecting any.

Before we look at specific techniques, let’s see why data augmentation matters.

- Avoids Overfitting: Models trained on small datasets often memorize the training examples instead of learning the underlying patterns. Augmentation counters this by introducing variation.

- Enhances Generalization: A model trained on a wider variety of data performs better on data it has never seen.

- Makes the Most of Limited Resources: Obtaining more data can be difficult, and augmentation is a smart way to get more out of the data you already have.

Data augmentation can be thought of as extending the education of your model without putting it back in school. This is especially useful when you need an accurate model but have little data to train it on.

Let’s examine some of the best data augmentation methods presently in use for text, images, and other machine learning applications.

Data Augmentation Techniques at a Glance

| Category | Technique | How it Works | Best For… |
| --- | --- | --- | --- |
| Image | Geometric Transforms | Flipping, rotating, or cropping the image. | Object detection, facial recognition. |
| Image | Color Jittering | Tweaking brightness, contrast, and saturation. | Outdoor scenes with varying light. |
| Text | Back Translation | Translating to another language and back. | Sentiment analysis, chatbots. |
| Text | Synonym Swap | Replacing words with their nearest meanings. | Expanding small text datasets. |
| Synthetic | GANs | AI “Generating” brand new, realistic data. | Medical imaging, autonomous driving. |
| General | Noise Injection | Adding “fuzz” or random data points. | Improving model robustness/stability. |

Core Image Augmentation Techniques

Image augmentation is the secret sauce for robust computer vision models. By artificially expanding your dataset, you teach your model to ignore “noise” and focus on the actual object features.

- Flipping & Rotation (Orientation Invariance): By flipping images horizontally or vertically, or rotating them by specific angles, you help the model recognize an object regardless of its orientation in the frame.

  - Best for: General object detection where the “up” direction isn’t fixed.

- Cropping & Scaling (Spatial Robustness): Randomly cropping sections or zooming into an image forces the model to learn features from partial views. This prevents the model from relying solely on an object’s position in the center of a photo.

- Noise Injection (Pixel-Level Resilience): Adding subtle “salt and pepper” or Gaussian noise mimics real-world sensor imperfections. This helps the model stay accurate even when processing low-quality or grainy footage.

- Color & Brightness Jitter (Lighting Adaptability): Modifying brightness, contrast, and saturation prepares your model for varying environmental conditions—from harsh sunlight to low-light nighttime environments.

- Random Erasing (Occlusion Handling): This involves masking random patches of the image with solid blocks or noise. It’s a “stress test” that forces the model to identify an object even when it is partially hidden behind something else.

PyTorch, OpenCV, and TensorFlow are some of the libraries that help in image augmentation.
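
To make the techniques above concrete, here is a minimal sketch of each one written with plain NumPy rather than any of those libraries. The function names, default parameters, and the random stand-in image are all assumptions made for illustration; a real pipeline would load actual photos and would usually rely on a library's optimized transforms.

```python
import numpy as np

rng = np.random.default_rng(seed=0)  # seeded so the sketch is reproducible

def horizontal_flip(img):
    """Mirror the image left to right."""
    return img[:, ::-1]

def rotate_90(img, k=1):
    """Rotate by k * 90 degrees (arbitrary angles would need interpolation)."""
    return np.rot90(img, k)

def random_crop(img, crop_h, crop_w):
    """Cut out a randomly positioned crop_h x crop_w window."""
    top = rng.integers(0, img.shape[0] - crop_h + 1)
    left = rng.integers(0, img.shape[1] - crop_w + 1)
    return img[top:top + crop_h, left:left + crop_w]

def gaussian_noise(img, sigma=10.0):
    """Add zero-mean Gaussian noise, then clip back to the 0-255 range."""
    noisy = img.astype(np.float32) + rng.normal(0.0, sigma, img.shape)
    return np.clip(noisy, 0, 255).astype(np.uint8)

def brightness_jitter(img, max_delta=0.3):
    """Scale all pixels by a random factor in [1 - max_delta, 1 + max_delta]."""
    factor = rng.uniform(1.0 - max_delta, 1.0 + max_delta)
    return np.clip(img.astype(np.float32) * factor, 0, 255).astype(np.uint8)

def random_erase(img, patch_h, patch_w, fill=0):
    """Mask one randomly positioned patch with a solid value."""
    out = img.copy()
    top = rng.integers(0, img.shape[0] - patch_h + 1)
    left = rng.integers(0, img.shape[1] - patch_w + 1)
    out[top:top + patch_h, left:left + patch_w] = fill
    return out

# Stand-in for a real photo: a random 64x64 RGB image.
image = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)

augmented = {
    "flip": horizontal_flip(image),
    "rotate": rotate_90(image),
    "crop": random_crop(image, 48, 48),
    "noise": gaussian_noise(image),
    "brightness": brightness_jitter(image),
    "erase": random_erase(image, 16, 16),
}

for name, result in augmented.items():
    print(name, result.shape, result.dtype)
```

Each function returns a new view or copy and leaves the input untouched, so the same source image can be passed through several transforms, or through a random combination of them, on every training epoch.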


Text Data Augmentation: Increasing the Power of Words

Because language has structure and rules, text augmentation is a little more challenging than image augmentation. However, it is still quite practical and advantageous, especially for natural language processing (NLP) applications.

Here are some techniques for enhancing textual data:

- Using Synonyms: Replace words with synonyms to create new sentences that keep the same meaning.

- Random Insertion or Deletion: Randomly insert or delete words to mimic the variation found in real input.

- Back Translation: The process of translating a sentence into another language and then back to the original is known as back translation. This often results in a statement that has the same meaning but a different structure.

- Changing Word Order: By rearranging the words in a sentence while keeping the correct grammar, you can produce diversity.

- Using Language Models: Language models such as GPT or BERT can generate paraphrases or substitute words in context.

Applications such as sentiment analysis, chatbot training, and spam detection benefit from text augmentation.
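
The simpler techniques from the list above (synonym replacement, random deletion, and random insertion) can be sketched in a few lines of standard-library Python. The `SYNONYMS` table and the function names here are hypothetical and exist only for illustration; a real project would draw synonyms from a proper thesaurus. Back translation and language-model rewriting need external models, so they are left out of this sketch.

```python
import random

# Hypothetical toy synonym table, for illustration only.
SYNONYMS = {
    "quick": ["fast", "speedy"],
    "happy": ["glad", "cheerful"],
    "movie": ["film"],
}

def synonym_replace(words, rng):
    """Swap every word that has an entry in SYNONYMS for a random synonym."""
    return [rng.choice(SYNONYMS[w]) if w in SYNONYMS else w for w in words]

def random_delete(words, p, rng):
    """Drop each word with probability p, but never return an empty sentence."""
    kept = [w for w in words if rng.random() >= p]
    return kept if kept else [rng.choice(words)]

def random_insert(words, rng):
    """Insert a synonym of one known word at a random position."""
    candidates = [w for w in words if w in SYNONYMS]
    if not candidates:
        return list(words)
    new_word = rng.choice(SYNONYMS[rng.choice(candidates)])
    out = list(words)
    out.insert(rng.randrange(len(out) + 1), new_word)
    return out

rng = random.Random(42)  # seeded so runs are repeatable
sentence = "the quick movie made me happy".split()

print(" ".join(synonym_replace(sentence, rng)))
print(" ".join(random_delete(sentence, 0.2, rng)))
print(" ".join(random_insert(sentence, rng)))
```

Random edits like these can change the meaning of a sentence (deleting “not”, for example), so it is worth spot-checking a sample of the augmented text before training on it.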

GANs: Generating New Data from Scratch

One of the most interesting developments in data augmentation is the use of Generative Adversarial Networks (GANs). These deep learning models can generate entirely new data that closely resembles your training data.

A GAN consists of two models that are trained against each other:

- The generator produces fake data.

- The discriminator tries to tell real data apart from the generator’s fakes.

Over the course of training, both models get better, and the generator starts to produce data that is remarkably realistic. GANs are especially good at producing images: they can generate human faces, artwork, or handwritten digits that are hard to distinguish from real data.
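
The tug-of-war between the two models can be illustrated through their loss functions. The sketch below is not a full GAN: it only computes the standard binary cross-entropy losses, using NumPy and made-up discriminator outputs, to show how the two objectives pull in opposite directions. The function names and the sample values are assumptions for illustration.

```python
import numpy as np

def binary_cross_entropy(pred, target, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and 0/1 targets."""
    pred = np.clip(pred, eps, 1.0 - eps)  # avoid log(0)
    return float(-np.mean(target * np.log(pred) + (1 - target) * np.log(1 - pred)))

def discriminator_loss(d_real, d_fake):
    """The discriminator wants to output 1 on real data and 0 on fakes."""
    return (binary_cross_entropy(d_real, np.ones_like(d_real))
            + binary_cross_entropy(d_fake, np.zeros_like(d_fake)))

def generator_loss(d_fake):
    """The generator wants the discriminator to output 1 on its fakes."""
    return binary_cross_entropy(d_fake, np.ones_like(d_fake))

# Made-up discriminator outputs: the probability that a sample is real.
d_real = np.array([0.9, 0.8, 0.95])
early_fakes = np.array([0.1, 0.05, 0.2])   # early in training: fakes are easy to spot
late_fakes = np.array([0.5, 0.45, 0.55])   # later: the discriminator is unsure

print("generator loss, early vs late:",
      generator_loss(early_fakes), generator_loss(late_fakes))
print("discriminator loss, early vs late:",
      discriminator_loss(d_real, early_fakes), discriminator_loss(d_real, late_fakes))
```

As the fakes become more convincing, the generator's loss falls while the discriminator's loss rises. In an actual GAN, both models are neural networks updated in alternation by gradient descent on these two losses.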

GANs enable the quick creation of large, high-quality datasets in domains where labeled data is expensive and scarce, such as autonomous driving and medical imaging.

Avoiding Overfitting by Using Augmented Data

Overfitting occurs when a model performs well on training data but badly on new, unseen data. It’s comparable to a student memorizing answers without understanding the subject matter. Data augmentation is one of the most effective ways to reduce overfitting. By showing the model slightly different versions of the same data, you train it to recognize general patterns rather than memorize exact examples.

This makes your models more dependable and improves their performance in real-world situations.

The Power of Dataset Size

In machine learning, size is important. The larger and more diverse your dataset is, the better your model will learn and generalize.

Instead of spending time and money collecting more raw data, you may use data augmentation to increase the effective size of your dataset.

For instance, if you apply 10 different augmentation techniques to 1,000 photographs, you get 10,000 variations. That’s 10 times as much training material from the same initial data!

The same goes for text and audio. Augmentation can help you get more out of your limited data than you might expect.
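
The arithmetic can be checked with a short NumPy sketch. The dataset below is random noise standing in for 1,000 photographs, and the ten transforms are one arbitrary choice picked for illustration, not a recommended set.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in dataset: 1,000 tiny 8x8 grayscale "photographs".
images = rng.integers(0, 256, size=(1000, 8, 8), dtype=np.uint8)

def brighten(x, delta):
    """Shift pixel values by delta and clip to the valid 0-255 range."""
    return np.clip(x.astype(np.int16) + delta, 0, 255).astype(np.uint8)

# Ten illustrative transforms; each maps a batch (N, H, W) to the same shape.
transforms = [
    lambda x: x[:, :, ::-1],                  # horizontal flip
    lambda x: x[:, ::-1, :],                  # vertical flip
    lambda x: np.rot90(x, 1, axes=(1, 2)),    # rotate 90 degrees
    lambda x: np.rot90(x, 2, axes=(1, 2)),    # rotate 180 degrees
    lambda x: np.rot90(x, 3, axes=(1, 2)),    # rotate 270 degrees
    lambda x: np.transpose(x, (0, 2, 1)),     # swap rows and columns
    lambda x: brighten(x, 30),                # brighter
    lambda x: brighten(x, -30),               # darker
    lambda x: np.roll(x, 1, axis=2),          # shift one pixel right (wraps around)
    lambda x: np.clip(x + rng.normal(0, 10, x.shape), 0, 255).astype(np.uint8),  # noise
]

variants = np.concatenate([t(images) for t in transforms])
print(variants.shape)  # (10000, 8, 8)
```

Keep in mind that these 10,000 variants are derived from the same 1,000 originals, so they are not as informative as 10,000 independently collected photographs.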

Conclusion

For machine learning practitioners, data augmentation is more than a trick; it is a foundational strategy. Augmentation lets you extract more value from your current dataset, whether it consists of text, images, or audio. It helps you:

- Avoid overfitting

- Improve model accuracy

- Save time and resources

- Increase the diversity of your data

From basic image flips and rotations to more complex methods like creating synthetic data with GANs, there are many tools available. The best part? Modern machine learning libraries can apply many of these techniques automatically.

Therefore, keep in mind that you don’t always need more data; you just need to use the data you have more effectively.

By investing in data augmentation, you can improve the accuracy and real-world performance of your machine learning models.

Frequently Asked Questions (FAQs)

1. Can data augmentation actually replace real data collection?

While it is incredibly powerful, it isn’t a 100% replacement. Real-world data captures unique “edge cases” that augmentation might miss. Think of augmentation as a way to “stretch” your existing data, but you still need a solid, high-quality foundation to start with.

2. Is it possible to “over-augment” a dataset?

Yes. If you apply too many transformations (e.g., rotating a “6” so much it looks like a “9”), you can introduce label noise, where the model learns the wrong information. Always ensure your transformations preserve the “essence” of the original label.
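
The “6” versus “9” problem is easy to reproduce. In the sketch below, the two digits are drawn as tiny 5x3 pixel glyphs (an assumption made for illustration, in the style of a seven-segment display), and `sample_rotation` is a hypothetical helper whose 15-degree limit is an arbitrary example value.

```python
import numpy as np

# Tiny 5x3 glyphs in the style of a seven-segment display (illustrative only).
SIX = np.array([
    [1, 1, 1],
    [1, 0, 0],
    [1, 1, 1],
    [1, 0, 1],
    [1, 1, 1],
])
NINE = np.array([
    [1, 1, 1],
    [1, 0, 1],
    [1, 1, 1],
    [0, 0, 1],
    [1, 1, 1],
])

# Rotating the "6" by 180 degrees produces exactly the "9" glyph,
# so an augmented sample still labeled "6" would now be mislabeled.
rotated_six = np.rot90(SIX, 2)
print(np.array_equal(rotated_six, NINE))  # True

def sample_rotation(rng, max_degrees=15.0):
    """Draw a rotation angle from a small range that should keep the label valid."""
    return rng.uniform(-max_degrees, max_degrees)

rng = np.random.default_rng(0)
print(sample_rotation(rng))
```

The right limit depends on the task: a small rotation range suits digits, while satellite or microscope images, which have no fixed “up” direction, can usually tolerate any angle.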

3. Does data augmentation increase training time?

Generally, yes. Since you are feeding the model more (or more complex) variations of the data, training takes longer. The trade-off is usually a more accurate and reliable model.

4. What are the best libraries for implementing these?

For Images: Albumentations, Torchvision, and Keras Preprocessing.

For Text: NLPAug, TextAttack, and NLTK.

For General ML: Scikit-learn.

5. How do GANs differ from standard augmentation?

Standard augmentation modifies existing data (like flipping a photo). GANs (Generative Adversarial Networks) create entirely new data points from scratch based on patterns they learned from the training set.